Have you ever scrolled through your Facebook feed and accidentally come across a misinformed article from a source you’ve never heard of, shared by a schoolmate you haven’t talked to in years? Because I certainly have. Well, Facebook has announced that it will make things harder for users who choose to share misinformation.

“We’ve taken stronger action against Pages, Groups, Instagram accounts and domains sharing misinformation and now, we’re expanding some of these efforts to include penalties for individual Facebook accounts too,” wrote Facebook.

Facebook says it already reduces a single post’s reach in News Feed once that post has been debunked, but it now aims to up the ante. Starting today, the social media platform will “reduce distribution of all posts in news feed” from a user’s account if that user has repeatedly shared misinformation.

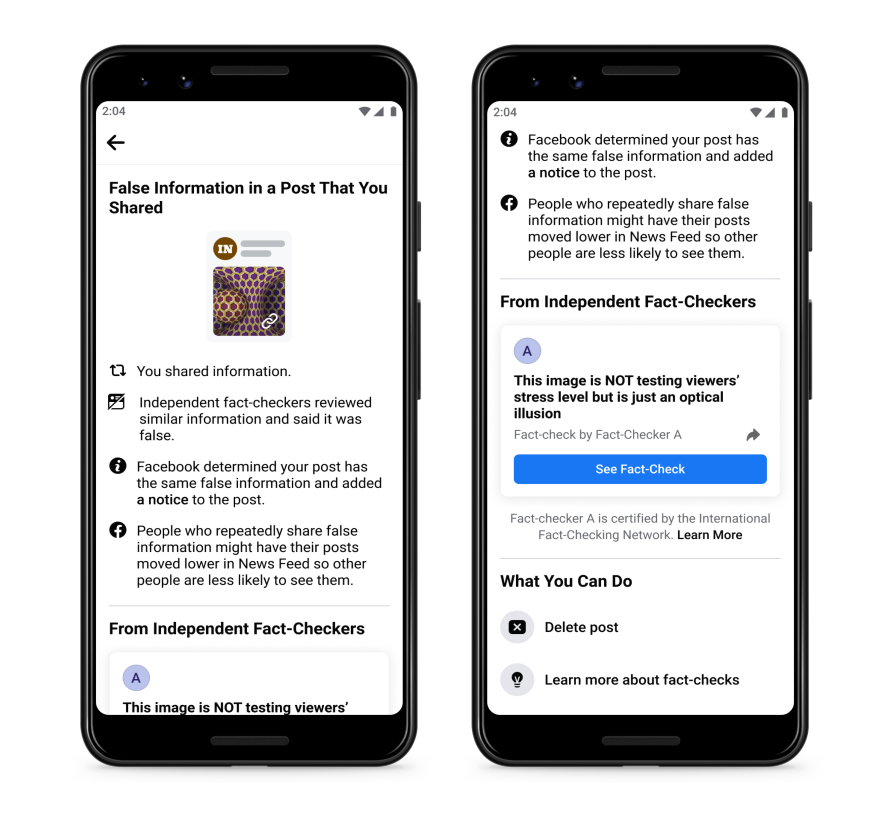

Users will also receive notifications informing them that they have shared false information. Each notification will include the fact-checker’s article debunking the claim, along with a prompt to share that article with their followers.

The notice also explains that people who repeatedly share false information “might have their posts moved lower in news feed so other people are less likely to see them”. In addition, a “What you can do” section lets users delete the post and learn more about fact-checks.

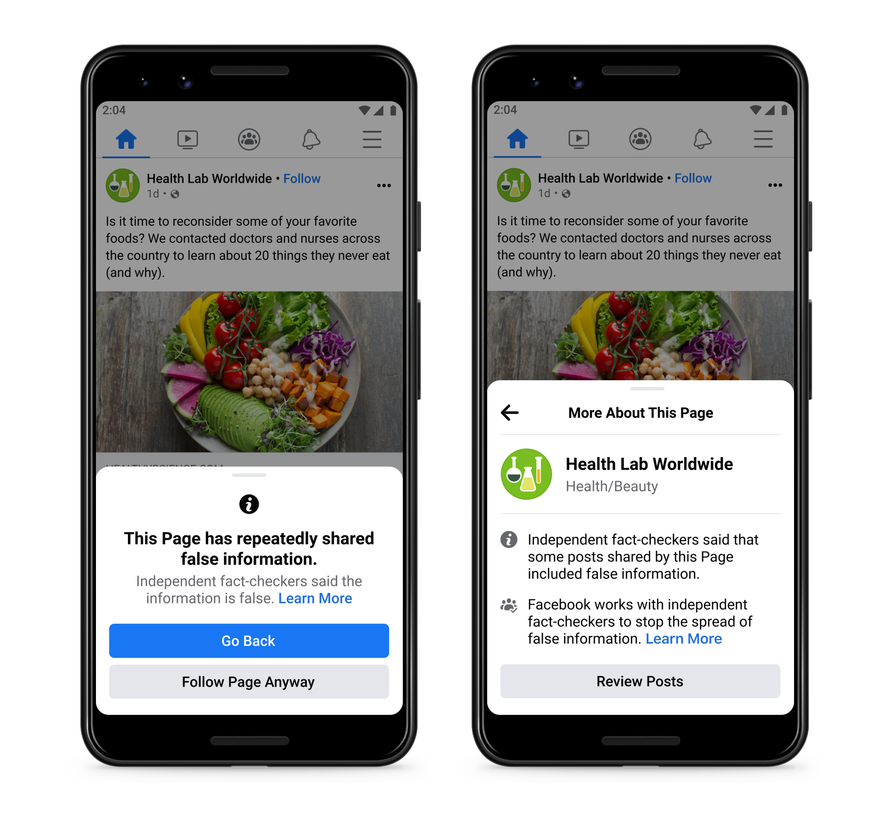

But it’s not just individual users who will need to be more careful about sharing suspicious articles, as Facebook Pages are also being held responsible for spreading misinformation. If a Page has repeatedly shared content that fact-checkers have rated false, users will see a pop-up to help them make an informed decision about whether or not they want to follow it.

While it’s commendable that Facebook seems to be taking some initiative in tackling the spread of misinformation, the move still raises plenty of questions. How quickly can fact-checkers do their job if misinformation sites keep churning out content? And how many flagged posts will it take to trigger the reduction in News Feed?

Dangerous false claims about COVID-19 and vaccines are also a huge problem, so why can’t Facebook simply block them instead of merely limiting their spread? Twitter seems to have no problem banning spreaders of fake news like Donald Trump, although it has also been deleting content from Palestinian residents due to “technical errors”.

Facebook has also been giving people “more context” about the Pages they see on the platform by labelling them. These labels include ‘public official’, ‘fan page’ and ‘satirical page’, which should give users a better understanding of where their information is coming from.