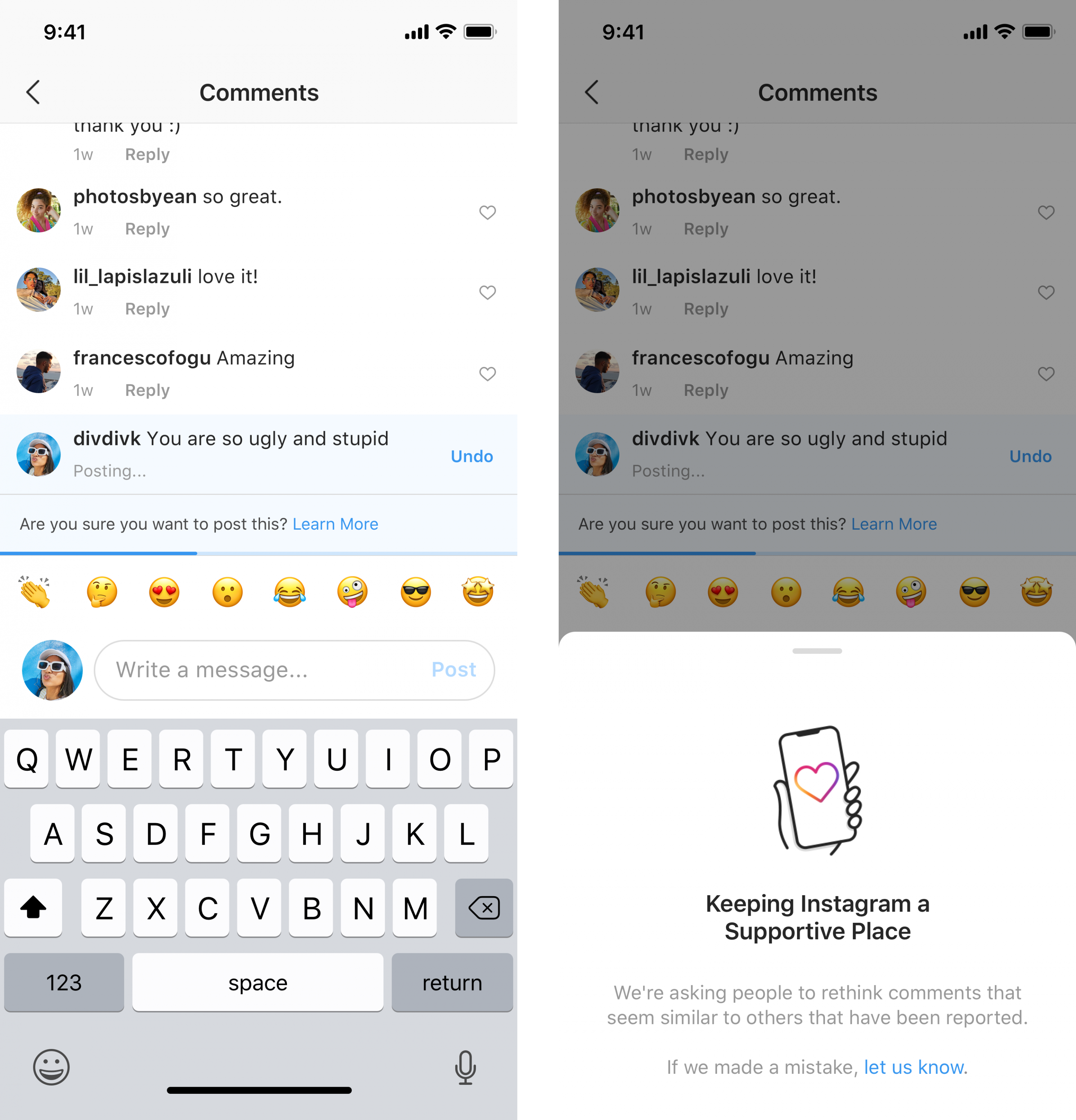

Instagram is now rolling out a new feature that uses artificial intelligence to flag inappropriate comments, while the social media platform is also trialing an added ability for users to “shadow ban” certain accounts from leaving public comments on some of their posts.

This comes as part of Instagram’s commitment to “lead the fight against online bullying”, and will give users the ability to restrict other users on their accounts without notifying them.

“Encouraging positive interactions”

This update has rolled out over the past few days. It prompts users when a comment they are about to post is flagged as inappropriate, giving the perpetrator the chance to reconsider their actions. Instagram reveals only that “some” people have actually re-worded their comment after being notified.

What is a shadow ban?

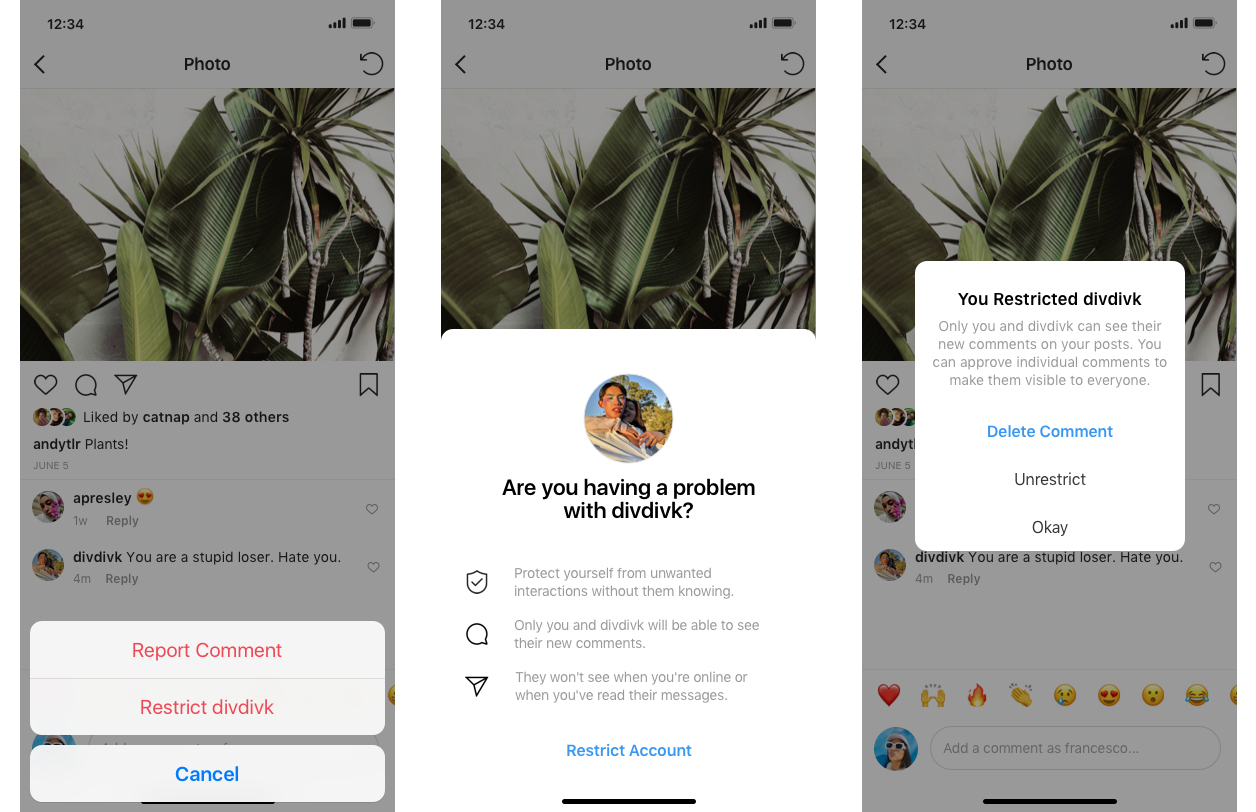

The “shadow ban” feature is currently being tested, and will give users the ability to restrict another user. Comments from a restricted user will be visible only to that restricted user, who also won’t be able to see when the account holder is online or when they’ve “seen” a direct message.

According to an official statement from Instagram:

“We’ve heard from young people in our community that they’re reluctant to block, unfollow, or report their bully because it could escalate the situation, especially if they interact with their bully in real life. Some of these actions also make it difficult for a target to keep track of their bully’s behaviour.”

At the moment, it isn’t clear how effective these measures are, but they are certainly a step in the right direction. Instagram has already tested similar measures, such as an offensive-comment filter, yet the new features offer something quite different: a way to block bullies without notifying them (and further provoking them).

Kudos, Instagram.
